Since I posted about the resignation of SAWYERX, even more TPF members have thrown in the towel. This doesn't shock me at all, as the responses to my prior post made it clear the vast majority of programmers out there are incapable of seeing past their emotional blinders in a way that works for them.

The latest round of resignations comes in the wake of the decision to ban MST. The Iron Law of Prohibition still holds true on the internet in the form of the Streisand Effect, so it's not shocking that this resulted in him getting more press than ever. See the image above.

I'll say it again, knowing none will listen. There's a reason hellbans exist. The only sanction that exists in an attention economy such as mailing lists, message boards and chatrooms is to cease responding to agitators. Instead P5P continues to reward bad behavior and punish good behavior as a result of their emotional need to crusade against "toxicity". Feed trolls, get trolls.

This is why I've only ever lurked P5P, as they've been lolcows as long as I've used perl. This is the norm with programmers, as being right and being happy are the same thing when dealing with computers. You usually have to choose one or the other when dealing with other people, and lots of programmers have trouble with that context switch.

So why am I responding to this now? For the same reason these resignations are happening. It's not actually about the issue, but a way to get attention, or as they say, "raise awareness about important issues". Otherwise typing out drivel like this feels like a waste of time next to all those open issues sitting on my tracker. This whole working on computers thing sure is emotionally exhausting!

The big tempest in a teapot for perl these days is whether OVID's new Class and Object system Corinna should be mainlined. A prototype implementation, Object::Pad, has come out trying to implement the already fleshed out specification. Predictably, resistance to the idea has already come out of the woodwork, as one should expect with any large change. Everyone out there who is invested in a different paradigm will find every possible reason to find weakness in the new idea.

In this situation, the degree to which the idea is fleshed out and articulated works against merging the change as it becomes little more than a huge attack surface. When the gatekeepers see enough things which they don't like, they simply reject the plan in toto, even if all their concerns can be addressed.

Playing your cards close to your chest and letting people "think it was their idea" when they give the sort of feedback you expect is the way to go. With every step, they are consulted and they emotionally invest in the concept bit by bit. This is in contrast to "Design by Committee" where there is no firm idea where to go in the first place, and the discussion goes off the rails due to nobody steering the ship. Nevertheless, people still invest; many absolute stinkers we deal with today are a result of this.

The point I'm trying to make here is that understanding how people emotionally invest in concepts is what actually matters for shipping software. The technical merits don't. This is what the famous Worse Is Better essay arrived at empirically without understanding this was simply a specific case of a general human phenomenon.

Reading Mark Gardner's latest post on what's "coming soon" regarding OO and perl has made me actually think for once about objects, which I generally try to avoid. I've posted a few times before that about the only thing I want regarding a new object model is for P5P to make up its mind already. I didn't exactly have a concrete pain point to give me cause to say "gimme" now. Ask, and ye shall receive.

I recently had an issue come down the pipe at playwright-perl. For those of you not familiar, I designed the module for ease of maintenance. The way this was accomplished is to parse a spec document, and then build the classes dynamically using Sub::Install. The significant wrinkle here is that I chose to have the playwright server provide this specification. This means that it was more practical to simply move this class/method creation to runtime rather than in a BEGIN block. Running subprocesses in BEGIN blocks is not usually something I would consider (recovered memory of ritual abuse at the hands of perlcc).
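As a rough illustration of the runtime approach described above, here is a minimal sketch that builds classes and methods from a spec after compile time. It uses plain typeglob assignment from core perl as a stand-in for Sub::Install, and everything named in it (the `%spec` contents, the `My::Sketch` namespace, the `_request` helper) is hypothetical rather than taken from playwright-perl.

```perl
use strict;
use warnings;

# Hypothetical spec; in the real module this comes from the playwright server.
my %spec = (
    Page  => [qw(goto title)],
    Mouse => [qw(click move)],
);

# Hypothetical stand-in for whatever forwards a command to the server.
sub _request {
    my ($self, $class, $method, @args) = @_;
    return "$class.$method(" . join(',', @args) . ")";
}

# Install one sub per (class, method) pair found in the spec.
sub build_classes {
    my ($namespace, $spec) = @_;
    for my $class (sort keys %$spec) {
        my $package = "${namespace}::$class";
        for my $method (@{ $spec->{$class} }) {
            no strict 'refs';    # needed to assign into a symbol table by name
            *{"${package}::$method"} = sub {
                my ($self, @args) = @_;
                return _request($self, $class, $method, @args);
            };
        }
    }
    return;
}

# Nothing under My::Sketch exists until this call runs, which is the
# difference between doing this at runtime and doing it in a BEGIN block.
build_classes('My::Sketch', \%spec);

my $page = bless {}, 'My::Sketch::Page';
print $page->goto('http://example.test'), "\n";    # prints: Page.goto(http://example.test)
```

The point of the sketch is only the timing: code that expects the classes to exist before `build_classes` has run will not find them.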

Anyways, I have a couple of options to fix the reporter's inability to subclass/wrap the Playwright child classes:

On the other hand, if we had a good "default mop" like Mark discusses, this would be a non-issue given we'd already get everything we want out of bless (or the successor equivalent). It made me realize that we could have our cake and eat it in this regard by just having a third argument to bless (what MOP to use).

perl being what it is though, I am sure there are people who are in the "bless is bad and should go away" gang. In which case all I can ask is that whatever comes along accommodates the sort of crazed runtime shenanigans that make me enjoy using perl. In the meantime I'm going back to compile-time metaprogramming.

Over the years I've had discussions over the merit of various forms of dependency resolution and people tend to fall firmly into one camp or the other. I have found what is best is very much dependent on the situation; dogmatic adherence to one model or another will eventually handicap you as a programmer. Today I ran into yet another example of this in the normal course of maintaining playwright-perl.

For those of you unfamiliar, there are two major types of dependency resolution:

Dynamic Linking: Everything uses global deps unless explicitly overridden with something like LD_LIBRARY_PATH at runtime. This has security and robustness implications as it means the program author has no control over the libraries used to build their program. That said, it results in the smallest size of shipped programs and is the most efficient way to share memory among programs. Given both these resources were scarce in the past, this became the dominant paradigm over the years. As such it is still the norm when using systems programming languages (save for some OSes and utilities which prefer static linking).

Static Linking: Essentially a fat-packing of all dependencies into your binary (or library). The author (actually whoever compiles it) has full control over the version of bundled dependencies. This is highly wasteful of both memory and disk space, but has the benefit of working on anything which can run its binaries. The security concern here is baking in obsolete and vulnerable dependencies. Examples of this approach would be most vendor install mechanisms, such as npm, java jars and many others. This has become popular as of late due to it being simplest to deploy. Bootstrapping programs are almost always using this technique.

There is yet another way, and this is the "have our cake and eat it too" approach best known from Windows DLLs. You can have shared libraries which expose every version ever shipped such that applications can have the same kind of simple deploys as with static linking. The memory tradeoffs here are not terrible, as only the versions used are loaded into memory, but you pay the worst disk cost possible. Modern packaging systems get around this by simply keeping the needed versions online, to be downloaded upon demand.

Anyways, perl is a product of its time and as such takes the Dynamic approach by default, which is to install copies of its libraries system-wide. That said, it's kept up with the times so vendor installs are possible now with most modules which do not write their makefiles manually.
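To make the vendor install idea concrete, here is a minimal sketch of a script preferring a project-local library directory over the system-wide one. The `local/lib/perl5` layout is an assumption (it is the layout tools such as local::lib and `cpanm -L local` produce); the directory name is otherwise arbitrary.

```perl
use strict;
use warnings;
use FindBin;

# Assumed layout: modules vendored next to this script under local/lib/perl5.
# "use lib" puts the directory at the front of @INC, so it is searched
# before the system-wide library paths.
use lib "$FindBin::Bin/local/lib/perl5";

print "Module search order:\n";
print "  $_\n" for @INC;
```

Anything not found in the vendored directory still falls through to the system-wide copies, so this sits somewhere between the two models rather than being a true fat-pack.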

Anyhow, this results in complications when you have multi-language projects, such as playwright-perl. Playwright is a web automation toolkit written in node.js, and so we will have to do some level of validation to ensure our node kit is correct before the perl interface can work. This is further complicated by the fact that playwright also has other dependencies on both browsers and OS packages which must be installed.
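The sort of validation meant here can be as small as checking that the needed tools are on the PATH before doing anything else. Below is a minimal sketch using only core modules; the function names are hypothetical, and the choice of `node` and `npm` as the tools to look for is an assumption rather than what playwright-perl actually checks.

```perl
use strict;
use warnings;
use File::Spec;

# Return the full path of the first executable named $name found in @dirs,
# or undef when there is none.
sub find_executable {
    my ($name, @dirs) = @_;
    for my $dir (@dirs) {
        my $candidate = File::Spec->catfile($dir, $name);
        return $candidate if -f $candidate && -x _;
    }
    return;
}

# Return the names of the required tools that could not be found.
sub missing_tools {
    my ($required, @dirs) = @_;
    return grep { !defined find_executable($_, @dirs) } @$required;
}

# Assumed tool list; adjust to whatever the node side really needs.
my @missing = missing_tools([qw(node npm)], File::Spec->path());
if (@missing) {
    warn "Missing from PATH: @missing; install these before using the perl interface.\n";
}
else {
    print "node toolchain found\n";
}
```

Note that this only resolves the dependency; it deliberately makes no attempt to install anything.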

Normally in a node project you could use webpack to fat-pack (statically link) all your dependencies. That said, packing entire browser binaries is a bridge too far, so we either have to instruct the user properly or install everything for them.

As is usual with my projects, I bit off a bit more than I could chew trying to be good to users, and made attempts to install the dependencies for them. Needless to say, I have ceased doing so. Looking back, this willingness to please has caused more of my bugs than any other. Yet again the unix philosophy wins; do one thing, do it well. This is also a big reason why dynamic linking won -- it makes a lot of things "not your problem". Resolving dependencies and installing them are two entirely separate categories of problem, and a program only has to solve one to run.

The long-term solution here is to wait until playwright can be shipped as an OS package, as many perl libraries are nowadays. It's interesting that playwright itself made an install-deps subcommand. I hope this means that is in the cards soon, as that's most of the heavy lifting for building OS packages.

The way software testing as a job is formally described is to provide information to decisionmakers so that they can make better decisions. Testers are fundamentally adversarial as they are essentially an auditor sent by management to evaluate whether the product an internal team produces is worth buying.

Things don't usually work out this way. The job is actually quite different in practice from its (aspirational) self-image. It turns out that the reason testers are not paid well and generally looked down upon in the industry is because of this reality. This is due primarily to the organizational realities of the modern corporation, and is reinforced by various macroeconomic and policy factors. Most of these situational realities are ultimately caused by deeply ingrained emotional needs of our species.

Adversarial processes are not morally or ethically wrong. It is in fact quite useful to take an adversarial approach. For example, AI researchers have found that the only reliable process to distinguish lies from truth in a dataset is precisely through adversarial procedure. However, the usefulness of an adversarial approach is compromised when a conflict of interest exists. This is why Judges recuse themselves from trials in which they have even the appearance of outcome dependence.

Herein lies the rub. Modern software firms tend to be a paranoid lot, as their (generally untalented and ignorant) management don't understand their software is in no way unique. They seem to act like gluing together 80% open source components is somehow innovation rather than obvious ideas with good marketing. In any case, because of this paranoia they don't want to expose their pile of "innovation" and its associated dirty laundry to the general public via leaks and so forth. They mistakenly believe that they can secure this most reliably with direct employment of testers rather than being careful with their contractors.

This forgets that the individual employee usually has nothing whatsoever that could be meaningfully recovered in the event of such a breach, and is practically never bonded against this. On the other hand, a contracting business stakes everything on its professionalism and adherence to contract and has far more to lose than a tester paid peanuts. This led me to the inescapable conclusion: The incentives encouraged for the vast majority of employed testers are the opposite of what is required to achieve the QA mission.

It turns out this happens for the same reason that Judges (paid by the state) don't recuse themselves from judgement in cases where their employer is the defendant or prosecutor. Because the job is not actually what it is claimed to be.

As if to rub this cognitive dissonance further in the face of the tester, modern organizations tend to break into "teams" and adopt methodologies such as scrum to tightly integrate the product lifecycle. Which means you as a tester now have to show solidarity and be a team player who builds others up instead of tearing down their work. To not do so is to risk being considered toxic.

The only way to actually do this is to prevent issues before they happen, which to be entirely fair is the cheapest point to do so. The problem of course with this is that it means in practice the programmer is basically doing Extreme Programming and riding shotgun with a tester. If the tester actually can do this without making the programmer want to strangle them, this means the tester has to understand programming quite well themselves. Which begs the question as to why they are wasting time making peanuts testing software instead of writing execrable piles of it. I've been there, and chose to write and make more every single time.

Everyone who remains a tester but not programmer is forced to wait until code is committed and pushed to begin testing. At that point it's too late from an emotional point of view; it's literally in the word -- commitment means they've emotionally invested in the code. So now the tester is the "bad guy" slipping schedule and being a cost center rather than the helper of the team. That is, unless the tester does something other than invalidate (mistaken) assumptions about the work product's fitness for purpose. Namely, they start validating the work product (and the team members personally by extension), emphasizing what passed rather than failed.

This is only the beginning of the emotional nonsense getting in the way of proper testing. Regardless of whether the customer wants "Mr. Right" or "Mr. Right Now", firms and their employees tend to have an aspirational self-image that they are making the best widget. The customer's reality is usually some variant of "yeah, it's junk but it does X I want at the price I want and nobody else does". I can count on one hand the software packages that I can say without qualification are superior to their competition, and chances are good that "you ain't it".

Nevertheless, this results in a level of "Quality theatre" where a great deal more scrutiny and rigor is requested than is required for proper and prompt decisionmaking. This is not opposed by most QAs, as they don't see beyond their own corn-pone. However, this means that some things which require greater scrutiny will not receive it, as resources are scarce.

Many testers also fall into this validation trap, and provide details which management regards as unimportant (but that the tester considers important). This causes managers to tune out, helping no one. This gets especially pernicious when dealing with those incapable of understanding second and third order effects, especially when these may lead to material harm to customers. When time is a critical factor it can be extremely frustrating to explain such things to people. So much so that sometimes you just gotta say It's got electrolytes.

Sometimes the business model depends on the management not understanding such harms. At some point you will have to decide whether your professional dignity is more important than your corn pone, as touching such topics is a third rail I and others have been fried on. I always choose dignity for the simple reason that customers who expose themselves to such harms willingly tend to be ignorant. Stupid customers don't have any money.

It's all these emotional pitfalls that explain why testers still cling to the description of their job as providing actionable information to decisionmakers. The only way to maintain your professional dignity in an environment where you can do everything right but still have it all go wrong is to divorce yourself from outcomes. Pearl divers don't really care if they sell to swine, you know?

Speaking of things outside of your control and higher-order effects, there are also market realities which must guide the tester as to what decisionmakers want to know, but will never tell you to look for. Traditionally this was done by business analysts who would analyze the competitive landscape in cooperation with testers, but that sounds a bit too IBM to most people so they don't do it. As such this is yet another task which it turns out you as a tester have to do more often than not. It is because of this that I developed a keen interest in business analysis and Economics.

The Iron Law of Project Management states that you have to pick two of three from: doing it fast, doing it cheap, and doing it well.

That said the people who want to hire testers are looking to transition from fast/cheap to fast/well by throwing money at the problem. This tends to run into trouble in practice, as many firms transition into competing based on quality too early, eating the margins beyond what the market and investors will bear.

The primary reasons for these malinvestments are interest rate suppression and corrupt units of account being the norm in developed economies. This sends false signals to management as to the desirability of investing in quality, which are then reinforced by the emotional factors mentioned earlier. Something will have to give eventually in such a situation and by and large it's been tester salaries, as with all other jobs that are amenable to outsourcing to low-cost jurisdictions. Many times firms have a bad experience outsourcing, and this is because they don't transfer product and business expertise to the testers they contract with first. There is no magic bullet there, it's gotta be sour mash to some degree. Expertise and tradition take people and time to build.

Also, price is subjective and its discovery is in many ways a mystical experience clouded by incomplete knowledge in the first place. It should be unsurprising that prices are subjective given quality itself is an ordinal and not cardinal concept. The primary means by which price is discovered is customer evaluation of a product and its seller's qualitative attributes versus how much both parties value the payment.

This ultimately means that to be an effective tester, you have to think like the customer and the entrepreneur. Being able to see an issue from multiple perspectives is very important to writing useful reports. This is yet another reason to avoid getting "too friendly with the natives" in your software development organization.

Speaking of mystical experiences, why do we care about quality at all? Quality is an important component of a brand's prestige, which is the primary subjective evaluation criteria for products. Many times there are sub-optimal things which can be done right, but only at prohibitive costs. Only luxury brands even dare to attempt these things.

Those of us mere mortals will have to settle for magic. In the field of carpentry there's an old adage: "If you can't conceal it, reveal it." Perfect joinery of trim to things like doorframes is not actually possible because wood doesn't work that way. So instead you offset it a bit. This allows light and shadow to play off it, turning a defect into decoration.

The best way to describe this in software terms is the load bearing bug. Any time you touch things for which customer expectations have solidified, even to fix something bad, expect to actually make it worse. This is because nobody ever reads change logs, much less writes them correctly. You generally end up in situations where data loss happens because something which used to hold up the process at a pain point has now been shaved off without the mitigant being updated. Many times this just means an error now sails through totally undetected, causing damage.

These higher-order effects mean in general that "fixing it" is many times not the right answer. Like with carpentry you have to figure out a way to build atop a flawed reality to result in a better finished product. This eventually results in large inefficiencies in software ecosystems and is a necessary business reality. The only way to avoid this requires great care and cleverness with design which many times is simply not feasible in the time allotted.

Assisting in the identification of these sorts of factors is a large part of "finding bugs before they happen", especially in the maintenance cycle. It's also key to the brand, as quality is really just a proxy for competence. Pushing fixes that break other things does not build such a reputation, and should not be recommended save in the situation where data loss and damage is the alternative.

The only way to actually have this competence is to not have high turnover in your test and development department. Unfortunately, the going rate for testers makes the likelihood that skilled testers stick around quite low.

I have spoken at length about the difference between Process and Mission-Driven organizations. To summarize, Mission-driven organizations tend to emphasize accomplishment over how it is achieved, while process oriented organizations do the opposite. Mission driven organizations tend to be the most effective, but can also do great evil in being sloppy with their chosen means. Process driven organizations tend to be the most stable, but also forget their purpose and do great evil by crowding out innovation. What seems to work best is process at the tactical level, but mission at the strategic and operational/logistical level.

The in-practice organizational structure of the firm largely determines whether they embrace process or mission at scales in which this is inappropriate. Generally, strong top-down authority will result in far more mission focus, while bottom-up consensus-based authority tends to result in process focus. Modern bureaucracies and public firms tend to be the latter, while private firms and startups tend to be the former. This transition from mission to process focus at the strategic and operational level is generally a coping mechanism for scaling past Dunbar's number.

In the grips of this transformation is usually when "middle management" and "human resources" personnel are picked up by a firm. Authority over the worker is separated from authority over product outcomes, leading to perverse incentives and unclear loyalties. As a QA engineer, it is unclear who truly has the final say (and thus should receive your information). Furthermore, it is not clear to the management either, and jockeying for relative authority is common.

The practical outcome of all this is that it's not clear who is the wringable neck for any given project. The personnel manager will generally be uninterested in the details beyond "go or no go", as to go any further might result in them taking on responsibility which is not really theirs. Meanwhile, the project manager will not have the authority to make things happen, and so you can tell them everything they should know but it will have no practical impact. As such, the critical decisionmaking loop of which QA is a critical component is broken, and accomplishing the mission is no longer possible. The situation degenerates into mere quality control (following procedure), as that's the only thing left that can be done. For more information on this subject, consider the 1988 book "Moral Mazes: The World of Corporate Managers".

To actually soldier on and try to make it work simply means the QA is placing responsibility for the project upon their own head with no real authority over whether it succeeds or fails. Only a lunatic with no instinct for self-preservation would continue doing this for long (ask me how I know!), and truly pathological solutions to organizational problems result from this. It's rare to see an organization recognize this and allow QA to "be the bad guy" here as a sort of kayfabe to cope with this organizational reality. IBM was one of the first to do it, and many other organizations have done it since; this is most common amongst the security testing field. The only other (pathological) thing that works looks so much like a guerrilla insurgency for quality that it inevitably disturbs the management to the point that heads roll.

Ultimately, integrating test too tightly with development is a mistake. Rather than try to shoehorn a job which must at some level be invalidational and adversarial into a team-based process, a more arms-length relationship is warranted.

Testing is actually the easy part. Making any of it matter is the hard part.

I saw a good article come over the wire: The Perl Echo Chamber: Is perl really dying? Friends, it's worse. The perl we knew and loved is already dead because the industry it grew up with is too...mature. The days of us living on the edge are over forever.

One passage in the linked article gets to half of the truth:

my conclusion is that it’s the libraries and the ecosystem that drive language use, and not the language itself.

When was the last time you thought about making an innovative new hammer or working for a roofing company? I thought not. What I am saying is that when the industry with which a toolset is primarily associated becomes saturated, innovation will die because at some point it's good e-damn 'nough.

The web years were a hell of a rush and much like the early oil industry, the policy was drill, baby drill. Someday you run out of productive wells and new drilling tech just isn't worth developing for a long, long time. We're here. The fact that there are more web control panels, CMSes and virtualization options than you can shake a stick at is testament to this fact.

As they say, the cure to low prices is low prices and vice versa. Given enough time, web expertise will actually be lost, much like carpentry is in the US market (because it just doesn't pay.) The need for it won't, so what we can expect the future to look like is less "bigco makes thing" than "artisan programmer cleans out rot and keeps building standing another 20 years".

Similarly, innovation doesn't totally die, it just slows down. Hell, I didn't know you could do in-place plunge cuts using an oscillating saw growing up doing lots of carpentry, but now it's commonplace. Programming Languages, Libraries, databases...they're just another tool in the bag. I'm not gonna cry over whether it's a Makita or a DeWalt.

Get over it, we're plumbers now. Who cares if your spanner doesn't change in a century. If you want to work on the bleeding edge, learn Python and Data Science or whatever they program robots with because that's what there's demand for.

A bizarre phenomenon in modern Corporations is what is generally referred to as "Playing House"; this would have been referred to in earlier times as "putting on airs". You may have noticed I've mentioned this in multiple of my earlier videos.

Generally this will mean things like Advertising how amazing your company is rather than the utility or desirability of your products. When I was young my father would point out such things to me; he generally considered it a sign of weakness in a firm, as it meant they didn't have any better ideas on what to advertise. While this is indeed true, it's not because they have no good ideas but because their idea is to sell the company.

In earlier times before Social Media, it was generally only firms which wished to be bought (or were publicly traded) which engaged in this behavior. However, now that everything is branding, practically every company plays the game unless they are largely immune to market forces.

Even then, large hegemonic institutions still do this, as it tends to be the cheapest way to purchase influence and legitimacy. See the Postscript on the Societies of Control by Deleuze for why this is. You see ridiculosity such as videos of soldiers dancing the macarena with the hapless peasants whose countries they occupy because of this.

Reinforcing that trend is that the corporate environment is almost always the same as the large hegemonic institutions, just in miniature. They tend to "ape their betters" as yet another way of demonstrating higher value.

However, "Clout Chasing" (also known as the "maximizing reach" marketing model -- see Rory Sutherland on this here) has largely reached the point of diminishing returns. This model is generally feast-or-famine; understanding this makes you realize why the FAANG stocks are nearly a fifth of the S&P market cap. Yet many continue to willingly barrel into a game with an obvious pareto distribution without the slightest clue "what it takes" to actually enter that rarefied stratosphere.

So, they make the same mistake of so many others by thinking "oh, I'll just look like the top 20% and it'll work, right?" Few understand that luxury brands are built by being different from everything else, which also means your company culture has to be different too. So, they hire an army of "Scrum Consultants", "Facilitators" and Managers to fit their dollop of the workforce into Procrustes' Bed. Millions of wasted hours in meetings and "Team Building" exercises later, the ownership starts wondering why it is their margins keep going down and that they have trouble retaining top talent.

It's the same story in the Hegemonic Institutions; Muth's Command Culture describes in great detail how the US Army (and eventually the Prussians too!) made the mistake of thinking the structure of the Prussian military was why it won, when in reality it was their highly skilled people and a lack of organizational barriers to their pursuit of success. The Map is not the territory, as they say.

All institutions are only as successful and righteous as their members can allow them to be. Most skilled people rarely consider exit until institutional barriers prove to hinder their ability to excel (which is freedom -- the ability to self-actualize). The trouble is that optimizing for Clout and Reach means that all controversial thought must be purged, as Guilt by Association is considered a lethal risk to your reach.

In reality it actually isn't -- "Love me, Hate me, but Don't Forget me" is actually the maxim which should be adopted; Oracle would have long since shuttered if having a negative reputation had anything to do with your reach. Being a source of Indignation is as effective as being a source of Comfort to build reach.

However this is not a common strategy due to the fact that most employees don't want to feel like they work for a devil. So, we instead get this elaborate maze of mission statements, team building and "CULTure" to brainwash the drones into believing whatever the company does is the "right thing" TM. Any employees who look at things objectively are demonized as being "Toxic" and "Negative" and ultimately pushed out.

The trouble with this is that the most effective people only get that way by seeing the world for what it is, and then navigating appropriately. When put into an environment where they know they cannot freely express themselves, there is a high default level of friction, which means they'll be ready to bolt or blow up over minor things. It takes but a straw to overburden a person walking upon eggshells.

From the perspective of the effective worker, most corporate environments simply look like a crab bucket. Any person who tries to excel (make it to the top of the bucket and possibly out) is immediately grabbed by the other crabs and forced back down into their little hell. This is horrific for productivity, as it means the organization is not pulling forward, but in all directions (which means they are effectively adrift).

Which brings us back to the whole "lack of better ideas than getting sold" thing. This condition is actually tolerable when attempting to sell a firm; prospective buyers rarely care about the opinions or conditions of the line workers. But with the advent of this being 24/7 365 for nearly all firms and institutions this stultification has become inescapable save for under the ministrations of "Turnaround Capital". These firms buy cheap and hated firms, stop playing house and start making money by unleashing their people to be productive. They don't have to resort to the usual nonsense because turning a failure into a success is enough of a demonstration of higher value.

Given enough time though, all companies have their growth level off and become boring. This is when these Veblenesque measures take hold, as familiarity is lethal to attraction (and building attraction is the essence of sales). It's like secondary sex characteristics for figments of our imagination which are legally people.

It's that last bit that's where the rub lies and why it all goes so wrong. The Principal-Agent problem is fundamentally why the people running firms end up screwing up their charge worse than child beauty pageant contestants. The whole reputational protection of the employees (need to see themselves as good) referred to earlier is one such case.

Here are a few others (list is by no means complete):

Stock Buybacks:

If the argument used that "there is no better use of our money than to speculate in our own stock" (WOW what a flex) were actually true, they'd issue a dividend and tell their shareholders as much.

The fact that this is not what is done tells you most of what you want to know.

The reality has to do with an executive team's compensation tied to share price.

Shiny new Office:

While workers tend to expect a minimum level of comfort, the level required for high performance to take hold is far lower than believed.

Repeated study has shown there to be little difference in productivity for nonwork facilities such as cafeterias, break rooms, gyms and game rooms.

The actual reason is that the agents want more of a social club, and to attract different types of (read: nonproductive) workers, as essentially paid friends.

Core Values, CoCs, etc.:

It is worth noting that the pursuit of profit or regard for shareholders and customers is almost never in any of these statements.

Rather than assume you are working with adults who can figure their interpersonal problems out, let us build a Procrustean bed so that we'll never have any problems!

This kind of contract to adhere to vague values (which frequently contradict religious convictions and are immaterial to the work) can never be seen as anything but an unconscionable contract of adhesion.

It is very similar to the ridiculous "Covert Contract" concept (If I do X in Y emotional relationship it will be trouble free), and nobody is shocked at its repeated failure. Nevertheless endless amounts of time are wasted on nonsense like this, which ultimately serves only as more roadblocks to productivity (and thus impetus for the productive to exit). Similarly, many lower status employees use it as a club against higher status ones; this is the essence of the crab bucket.

For example, a common theme in CoCs & "Values" statements is that colleagues must be shown "respect" of which the level is never clearly defined. It then becomes a mystery why bad ideas proliferate as "well golly gee we gotta respect everyone" regardless of whether such respect is merited or not.

This sort of application of the "reach" marketing philosophy (I have a hammer, everything is a nail) is especially ridiculous here. Let us be so open minded our brains fall right out. Let us be so approachable that we get mugged in broad daylight. Let us kowtow to our agents (especially the lawyers) such that our shareholders are ruined.

Were people rational about these things, they would simply insure employees against lawsuits resulting from interpersonal conflicts and move on. It'd be a lot cheaper than letting lawyers strangle your corporation with rules. It would also still weed out the truly troublesome via excessive premium increases. However, that acknowledges human nature and imperfection, which is not how the agents want to see themselves -- they are all angels, and like to virtue-signal. Management sees all this money they are "saving" avoiding lawsuits but forget the money left on the table by the talent which left or was forced out.

Ultimately all these statements of virtue are actually signals of luxury and leisure (abundance). People in conditions of scarcity cannot afford to let principle get in the way of profit. Which makes many of these things emanating from firms with high Debt to Income ratios, triple digit P/Es and yield below 1% seem quite bizarre.

There are a few other dubiously useful things like offsite meetings and dinners, but those can be reasonably justified as compensation (of the kind that avoids taxation, yay). The most complete picture of the situation was written when I was but four years old: The Suicidal Corporation. Give it a gander when you get a chance.

Knowing all this, what then shall we do? By and large, the productive have chosen exit or been forced into entrepreneurship. The whole "rise of the hustle economy" is pretty much exactly this. Instead of the corporate strategy of maximizing reach, they maximize engagement. Which is a hell of a lot more effective in the long run.

Which means that as a high-talent person you too have to play the "Demonstrating Higher Value" game, rather than be able to toil and build wealth in obscurity. So rather than lament the situation I have decided instead to embrace it. In retrospect, it is really the only path left for growth as I have more or less reached the highest echelons of compensation as a software developer for my market already.

I have to have a solution for every major OS, as this is a

necessity for testing software properly.

On Linux I can pretty much have everything I need on one to N+1 virtual desktops, where N is the number of browsers I need to support, plus one window for doing documentation lookups (usually on a second monitor). I do most of my development on Linux for precisely these reasons.

This mostly works the same on OSX, however they break immersion

badly for people like me by having:

They seemed to have optimized for the user which Alt+Tabs between

700 open windows in one workspace, which is a good way to break

flow with too much clutter.

On Windows, VSCode has a good shortcut to summon the console, and a vim plugin that works great. Windows virtual desktops also work correctly, and I can configure shortcuts on Linux to match both it and the console summon. I would prefer it be the other way around, but commercial OS interface programmers tend to either be on a power trip or too afraid of edge cases to allow user choice. If I could have a tmux-like shortcut to page between VSCode workspaces like I do panes I'd be a happier camper, as I wouldn't need as many virtual desktops. This is apparently their most popular feature request right now, go figure.

Beyond the little hacks you do to increase flow, mitigating the

impact of interruptions is important. This is a bit more

difficult as much of this is social hacking, and understanding

human nature. The spread of computing into the general

population has also complicated this as the communication style of

the old order of programmers and engineers is radically different

from the communication norms of greater society. I, along with many of my peers, am still having a difficult time adjusting to this.

The preferred means of communication

for engineers is information-primary rather than

emotion-primary. The goal of communication is to convey

information more than feeling (if that is communicated at

all). The majority of society does not communicate in this

fashion. Instead they communicate Feelings above all else

and Information is of secondary (if any) importance. For

these people, their resorting to communication of information

is a sign of extreme frustration that you have not yet

discerned their emotional message and provided the validation they

are seeking. If they haven't already lashed

out, they are likely on the way to doing so.

It is unfortunate to deal with people operating via a juvenile

and emotional communication style, but this is the norm in society

and over the last 20 years has entirely displaced the existing

engineering culture at every firm I've worked at. Code

reviews can no longer be blunt, as it is

unlikely you are dealing with someone who is not seeking

validation as part of their communication packets. The punky

elements of culture which used to build friendship now build

enmity (as you are dealing with the very "squares" nerd culture

embraced being hated by). Nevertheless, you still have to

walk in both worlds as there are still islands of other hackers

just like you. Taking on and off these masks is risky,

however, as people are quite hung up on the notion that people

must be 100% congruent with how they are perceived outwardly.

Everyone out there building an online reputation learns quickly

that courting controversy will get you far more engagement than if

you had made a program which cured eczema and allowed sustained

nuclear fusion. Emotional highs punctuated by warm fuzzies is

generally the sort of rollercoaster ride people are looking

for. Lacking better sources they will attempt to draw you

into their emotional world. Their world is a skinner box,

and if you enter it unawares they will train you.

They cannot help but be this way, so you must turn off the part

of your brain that looks for informational meaning in mouth

noises. Their utterances have little more significance than

the cries of a baby or yapping of a dog. The only thing that

matters is whether the behavior is something you wish to encourage

or discourage.

Operant conditioning is how you must regard your

interactions. When you validate them (usually with

attention), you encourage that behavior. Non-acknowledgement

of irritating behavior is often the most effective

discouragement. The natural urge to engage with any point of

fact must be resisted, and instead reserve your communications to

that which advances your purposes. When a minimum of

validation is demanded, dismissal via fogging, playing

dumb or broken-record technique should be engaged in, rather than

the hard no which is deserved. A satisfying response to a

demand can never be offered unless you wish to be dominated by

those around you. Many see this as Machiavellian

manipulation, but there is no choice. You play this game, or

get played.

Nevertheless, this all has a huge effect on the corporate

environment. Most firms devote far more energy to playing

house than pleasing customers and making money, and tech is no

exception. Fellow employees are far more likely to seek

validation on this schedule with each other than

customers. Most satisfy themselves with a stable IV drip of

validation from their local group rather than experiencing the

much more rewarding experience of solving customer problems.

This should come as no shock, given social media is purpose built

to inculcate this mental model, as it was found to maximize

engagement. This is also good at building solidarity at a

firm but unfortunately comes at the expense of crowding-out

emotional investment in the customer who cannot and should not

have so tight an OODA loop with their vendors.

You break out of your loop when you stop getting meaningful

observations. Many organizations have successfully adopted

this (see OPCDA). The whole point here is that you accumulate fewer bad designs lurking in your code, as you can refine constraints quickly enough to not over-invest in any particular solution.

For those of you not familiar with me, I have a decade of

experience automating QA processes and testing in general.

This means that the vast majority of my selling has been of two

kinds:

That said, I also wore "all the hats" in my startup days at HailStrike, and had to talk a customer down from bringing their shotgun to our office.

I handled that one reasonably well, as the week beforehand I'd read Carl Sewell's Customers for Life and Harry Browne's The Secret of Selling Anything.

The problem was that one of the cronies of our conman CEO was a

sales cretin there and promised the customer a feature that didn't

exist and didn't give us a heads up.

It took me a bit to calm him down and assure him he was talking to

a person that could actually help him, but after that I found out

what motivated him and devised a much simpler way to get him what

he wanted.

A quick code change, a deploy and call back later to walk him

through a few things to do on his end to wrangle data in Excel and

we had a happy camper.

He had wanted a way to bulk import a number of addresses into our

systems and get a list of hailstorms which likely impacted the

address in question, and a link into our app which would pull the

storm map view immediately (that they could then do a 1-click

report generate for homeowners).

We had a straightforward way of doing this for one address at a

time, but I had recently completed optimizations that made it

feasible to do many as part of our project to generate reports up

to two years back for any address.

Our application was API driven and already had a means to process

batched requests, so it was a simple matter of building an excel

macro talking to our servers which he could plug his auth

credentials into.

I built this that afternoon and sent it his way. This

started a good email chain where we made it an official feature of

the application.

It took a bit longer to build this natively into our application,

but before the week was up I'd plumbed the same API calls up to

our UI and this feature was widely available to our customers.

I was also able to give our sales staff a stern talking-to (and gave them copies of C4L and SSS), which kept this from happening going forward, but the company ultimately failed thanks to the aforementioned conman CEO looting the place.

After that experience I went back to being a salaryman over at

cPanel. There I focused mostly on selling productivity tools

internally until I transitioned into a development role.

I'd previously worked on a system we called "QAPortal" which was

essentially a testing focused virtual machine orchestration

service based on KVM. Most of the orchestration

services we take for granted today were in their infancy at

that time and just not stable or reliable enough to do the

job. Commercial options like CloudFormation or vSphere were

also quite young and expensive, so we got things done using perl,

libvirt and a webapp for a reasonable cost. It also had some

rudimentary test management features bolted on.

That said, it had serious shortcomings, and the system was essentially unchanged during the two-year hiatus I had over at HailStrike, as all the developers moved on to something else after the sponsoring manager got axed due to his propensity to have shouting matches with his peers.

I was quickly tasked with coming up with a replacement. The

department evaluated test management systems and eventually

settled on TestRail, which I promptly wrote the perl API client

for and put it on CPAN.

The hardware and virtual machine orchestration was replaced with

an openstack cluster, which I wrote an (internal) API library for.

I then extended the test runner `prove` to talk to it, multiplex its argument list over the various machines we needed to orchestrate and report results to our test management system.

All said, I replaced the old system within about 6 months.

If it were done today, it would have taken even less time thanks

to the advances in container orchestration which have happened in

the intervening time. The wide embrace of SOAs

has made life a lot better.

Now the team had the means to execute tests massively in parallel

across our needed configurations, but not every team member was

technical enough to manage this all straightforwardly from the

command line. They had become used to the old interface, so

in a couple of weekends I built some PHP scripts to wrap our apps

as an API service and threw up a jQuery frontend to monitor test

execution, manage VMs and handle a few other things the old system

also accomplished.

Feedback was a lot easier than with external customers, as my

fellow QAs were not shy about logging bugs and feature requests.

I suspect this is a lot of the reason why companies carefully cultivate alpha and beta testers from their early adopter group of rabid fans. Getting people in the "testing mode" is a careful art which I had to learn administering exploratory test sessions back at TI, and not to be discarded carelessly. That is essentially the core of the issue when it comes to getting valid reports back from customers. You have to do Carl Sewell's trick of asking "what could have worked better, what was annoying...", as that is the sort of user feedback you want rather than flat-out bugs. Anything which breaks the customers' immersion in the product must be stamped out -- you always have to remember you are here to help the user, not irritate them.

Rewarding these users with status, swag and early access was the

most reliable way to weed out time-wasters; you only want people

willing to emotionally invest, and that means rewards have to

encourage deeper integration with the product and the

business. It also doesn't hurt that it's a lot cheaper and

easier to justify as expenses than bribes.

Measuring adoption of software and productivity ideas in general

can be tricky unless you have a way to either knock on the door or

phone home. Regardless of the approach taken, you also have to

track it going forwards, but thankfully software makes that part

easy nowadays.

Sometimes you use A/B tests and other standard conversion metrics,

as I used extensively back at HailStrike. I may have tested

as much copy as I did software! Truly the job is just

writing and selling when you get down to it.

In the case of inter-organization projects most of the time it's

literally knocking on the door and talking to someone. At

some level people are going to "buy" what you are doing, even if

it's just giving advice. This is nature's way of telling you

"do more of this, and less of the rest".

I can say with confidence that the best tool for the job when it

comes to storing this data is a search engine, as you eventually

want to look for patterns in "what worked and didn't".

Search engines and Key-Value stores give you more flexibility in

what IR

algorithm best matches the needs of the moment. I use

this trick with test data as well; all test management systems use

databases which tend to make building reports cumbersome.

Rather than flippantly dismiss the original question, I would

like to revisit the problem. While it is obvious that I will

probably gain more over the long term by sacrificing my desire to

do something fun instead of writing this article, one must also

take into consideration the law of diminishing

marginal utility and the Paradox of Value. Thinking

long term means nothing when one is insolvent or dead without

heirs tomorrow. There will always be an infinite number of

possible ends for which I sacrifice my finite means. As an

optimization problem, it is NP hard. The best we can do is

to use the Kelly

Criterion to distribute our time and other assets wisely

among the opportunities we best understand the risks about.

Building an online reputation is quite expensive and time

consuming, but is beginning to pay off. It doesn't hurt that

I'm pursuing multiple aims simultaneously (building a MicroISV

product, chasing contracts) with everything I write these

days. That said it cannot be denied that hanging out your

shingle is tantamount to a financial suicide mission without

multiple years of runway. Had I not spent my entire adult

life toiling, living below my means and not taking debts, none of

this would be possible. In many ways it's a lot like going

back to college, but the hard knocks I'm getting these days have

made me learn a whole lot more than a barrel full of professors.

For those who insist on the technical answer to this question, I

would direct you to observe the design of Selenium::Client

versus that of Selenium::Remote::Driver.

This is pretty much my signature case

for why picking a good design from the beginning and putting in

the initial effort to think is worth it. My go-to approach

with most big balls

of mud is to stop the bleeding with modular design.

Building standalone plugins that can ship by themselves was a very

effective approach at cPanel, and works very well when dealing

with Bad

and Right systems. What is a lot harder to deal with

is "Good and Wrong" systems, usually the result of creationist

production. When dealing with a program that puts users and

developers into Procrustes' bed

rather than conforming to their needs you usually have to start

back from 0. Ironically most such projects are the result of

the misguided decision to "rewrite it, but correctly this time".

Given cPanel at the time was a huge monorepo sort of personifying "bad design, good execution", many "let's rewrite it, but right this time" projects happened and failed, mostly due to having forgotten the reasons it was written the way it had been in the

first place. New versions of user interfaces failed to

delight users thanks to removing features people didn't know were

used extensively or making things more difficult for users in the

name of "cleaner" and "industry standard" design. A lot of

pain can be brought to a firm when applying development standards

begins to override pleasing the customer. The necessity of

doing just that eventually resulted in breaking the monolith to

some extent, as building parallel distribution mechanisms was the

only means to escape "standardization" efforts which hindered

satisfying customer needs in a timely manner.

This is because attempting to standardize across a monorepo

inevitably means you can't find the "always right" one-size

fits-all solution and instead are fitting people into the iron

bed. The solution of course is better organizational

design rather than program design, namely to shatter the

monolith. This is also valuable at a certain firm scale

(Dunbar's number again), as nobody can fit it all into their head

without resorting to public interfaces, SOA and so forth.

Reorientation to this approach is the textbook

example of short-term pain that brings long-term benefit,

and I've leveraged it multiple times to great effect in my career.

When preparing any tool for which you see all the pieces readily available, but which nobody has executed upon, you begin to ask yourself why that is. This is essentially what I've been going through building the pairwise tool.

Every time I look around and don't see a solution for an

old problem on CPAN, my spider-senses start to fire. I saw

no N-dimensional combination methods (only n Choose k) or bin

covering algorithms, and when you see a lack of N-dimensional

solutions that usually means there is a lack of closed form

general solutions to that problem. While this is not true

for my problem space, it rubs right up against the edge of NP hard

problems. So it's not exactly shocking I didn't see anything

fit to purpose.

The idea behind pairwise

test execution is actually quite simple, but the constraints

of the software systems surrounding it risk making it more complex

than is manageable. This is because unless we confine ourselves to

a very specific set of constraints, we run into not one, but two

NP hard problems. We could then be forced into the unfortunate

situation where we have to use Polynomial time approximations.

I've run into this a few times in my career. Each time the team

grows disheartened as what the customer wants seems on the surface

to be impossible. I always remember that there is always a way to

win by cheating (more tight constraints). Even the tyranny of the

rocket equation was overcome through these means (let's put a

little rocket on a big one!)

The first problem is that N-Wise test choosing is simply a combination.

This results in far, far more platforms to test than is practical

once you get beyond 3 independent variables relevant to your

system under test. For example:

A combination with 3 sets containing 3, 5 and 8 will result in 3

* 5 * 8 = 120 systems under test! Adding in a fourth or fifth will

quickly bring you into the territory of thousands of systems to

test. While this is straightforward to accomplish these

days, it is quite expensive.
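To make the arithmetic concrete, here is a small Python sketch (not the Perl the tool itself is written in). The set names and members are placeholders invented for illustration; only the sizes 3, 5 and 8 come from the example above.

```python
from itertools import product
from math import prod

# Hypothetical attribute sets; only their sizes (3, 5, 8) come from the text.
oses = [f"os{i}" for i in range(3)]
browsers = [f"browser{i}" for i in range(5)]
packages = [f"pkg{i}" for i in range(8)]


def full_combination_count(*sets):
    """Number of systems under test if every combination is built."""
    return prod(len(s) for s in sets)


# Every possible system under test, one tuple per combination.
all_systems = list(product(oses, browsers, packages))

print(full_combination_count(oses, browsers, packages))
print(full_combination_count(oses, browsers, packages, range(10)))
```

A fourth set with ten members multiplies the total by ten, which is how you land in the thousands so quickly.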

What we actually want is an expression of the pigeonhole

principle. We wish to build sets where every

element of each component set is seen at least once, as

this will cover everything with the minimum number of needed

systems under test. This preserves the practical purpose of

pairwise testing quite nicely.

In summary, we have a clique problem and a bin covering problem. This means that we have to build a number of bins from X number of sets each containing some amount of members. We then have to fill said bins with a bunch of tests in a way which will result in them being executed as fast as is possible.

Each bin we build will represent some system under test, and each set from which we build these bins a particular important attribute. For example, consider these sets:

A random selection will result in an optimal multi-dimensional "pairwise" set of systems under test:

The idea is to pick one of each of the set with the most members

and then pick from the remaining ones at the index of the current

pick from the big set modulo the smaller set's size. This is the

"weak" form of the Pigeonhole Principle in action, which is why it

is solved easily with the Chinese

remainder theorem.
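The modulo pick described above can be sketched in a few lines of Python. This is an illustration under assumptions rather than the tool's own code: the attribute names and members are made up, every set is assumed non-empty, and the random shuffling of each set is left out so the output is predictable.

```python
def weak_pigeonhole_bins(attributes):
    """Build one bin per member of the largest set.

    attributes maps an attribute name to a non-empty list of members.
    Smaller sets are indexed modulo their own size, so every member of
    every set shows up in at least one bin.
    """
    biggest = max(attributes, key=lambda name: len(attributes[name]))
    bins = []
    for i, pick in enumerate(attributes[biggest]):
        system = {biggest: pick}
        for name, members in attributes.items():
            if name != biggest:
                system[name] = members[i % len(members)]
        bins.append(system)
    return bins


# Hypothetical sets, purely for illustration.
example = {
    "os": ["linux", "windows", "macos"],
    "arch": ["x86_64", "arm64"],
    "browser": ["firefox", "chrome", "safari", "edge"],
}
for system in weak_pigeonhole_bins(example):
    print(system)
```

The number of bins equals the size of the largest set, which is the minimum that can still show every member at least once.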

You may have noticed that perhaps we are going too far with our constraints here. This brings in danger, as the "strong" general form of the pigeonhole principle means we are treading into the waters of Ramsey's (clique) problem. For example, if we drop either of these two assumptions we can derive from our sets:

We immediately descend into the realm of the NP hard problem.

This is because we are no longer working in a principal ideal domain and can no longer cheat using the Chinese remainder theorem. In this

reality, we are solving the Anti-Clique

problem specifically, which is particularly nasty. Thankfully, we

can consider those two constraints to be quite realistic.

We will have to account for the fact that the variables are

actually not independent. You may have noticed that some of these

"optimal" configurations are not actually realistic. Many

Operating systems do not support various processor architectures

and software packages. Three of the configurations above are

currently invalid for at least one reason. Consider a

configuration object like so:

Can we throw away these configurations without simply

"re-rolling" the dice? Unfortunately, no. Not without

using the god

algorithm of computing every possible combination ahead of

time, and therefore already knowing the answer. As such our

final implementation looks like so:

This brings us to another unmentioned constraint: what happens if

a member of a set is incompatible with all members of another

set? It turns out accepting this is actually a significant

optimization, as we will end up never having to re-roll

an entire sequence. See the while loop above.
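One way to picture re-picking a single member instead of re-rolling the whole sequence is the Python sketch below. It rests on assumptions the text does not confirm: incompatibilities are modeled as forbidden pairs, the search simply walks forward from the modulo index, and an attribute with no compatible member is left unset (None). All names are hypothetical.

```python
def pick_compatible(members, start, chosen, incompatible):
    """Walk members from index start, wrapping around, and return the
    first one compatible with everything already chosen, else None."""
    for step in range(len(members)):
        candidate = members[(start + step) % len(members)]
        if all(frozenset((candidate, other)) not in incompatible
               for other in chosen):
            return candidate
    return None  # assumption: accept the gap rather than re-roll


def build_bins(attributes, incompatible):
    """Modulo pick as before, but skipping forbidden pairs."""
    biggest = max(attributes, key=lambda name: len(attributes[name]))
    bins = []
    for i, pick in enumerate(attributes[biggest]):
        system = {biggest: pick}
        for name, members in attributes.items():
            if name == biggest:
                continue
            system[name] = pick_compatible(
                members, i % len(members), list(system.values()), incompatible
            )
        bins.append(system)
    return bins


# Hypothetical data: pretend macos cannot run on x86_64.
sets = {"os": ["linux", "windows", "macos"], "arch": ["x86_64", "arm64"]}
forbidden = {frozenset(("macos", "x86_64"))}
for system in build_bins(sets, forbidden):
    print(system)
```

Note that skipping forward can cost coverage of a member that would otherwise have been picked, which is part of why the randomized ordering discussed next matters.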

Another complication is the fact that we will have to randomize the set order to achieve the goal of eventual coverage of every possible combination. Given the intention of the tool is to run decentralized and without a central oracle other than git, we'll also have to use a seed based upon its current state. The algorithm above does not implement this, but it should be straightforward to add.
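A minimal Python sketch of seeding from repository state might look like the following. Which piece of git state to use is my assumption (the output of `git rev-parse HEAD` is one candidate); here it is just passed in as a string, and the function name is hypothetical.

```python
import hashlib
import random


def seeded_order(members, repo_state):
    """Return a shuffled copy of members, determined only by repo_state.

    repo_state is any string describing the repository, for example a
    commit hash. Every node that sees the same state derives the same
    order without talking to a central oracle.
    """
    digest = hashlib.sha256(repo_state.encode("utf-8")).digest()
    seed = int.from_bytes(digest[:8], "big")
    shuffled = list(members)
    random.Random(seed).shuffle(shuffled)
    return shuffled


# Hypothetical commit hash, for illustration only.
state = "0123456789abcdef0123456789abcdef01234567"
print(seeded_order(["linux", "windows", "macos"], state))
```

Using a private `random.Random` instance keeps the shuffle from disturbing, or being disturbed by, any other randomness in the program.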

We at least have a solution to the problem of building the bins. So, we can move on to filling them. Here we will encounter trade-offs which are quite severe. If we wish to accurately reflect reality with our assumptions, we immediately stray into "no closed form solution" territory. This is the Fair Item Allocation problem, but with a significant twist. To take advantage of our available resources better, we should always execute at least one test. This will result in fewer iterations to run through every possible combination of systems to test, but also means we've cheated by adding a "double spend" on the low-end. Hooray cheating!

The fastest approximation is essentially to dole out a number of tests equal to the floor of dividing the tests equally among the bins, plus floor( (tests % bins) / tests ), which adds one test per bin in the case you have fewer tests than bins. This has an error which is not significant until you reach millions of tests. We then get eaten alive by rounding error due to flooring.

It is worth noting there is yet another minor optimization in our production process here at the end, namely that if we have more systems available for tests than tests to execute, we can achieve total coverage in fewer iterations by repeating tests from earlier groups.

Obviously the only realistic assumption here is #2. If tests can be executed faster by breaking them into smaller tests, the test authors should do so, not an argument builder.

Assumptions #1 and #3, if we take them seriously would not only doom us to solving an NP hard problem, but have a host of other practical issues. Knowing how long each test takes on each computer is quite a large sampling problem, though solvable eventually even using only git tags to store this data. Even then, #4 makes this an exercise in futility. We really have no choice but to accept this source of inefficiency in our production process.

Invalidating #5 does not bring us too much trouble. Since we expect to have a number of test hosts which will satisfy any given configuration from the optimal group and will know how many there are ahead of time, we can simply split the bin over the available hosts and re-run our bin packer over those hosts.

This will inevitably result in a situation where you have an

overabundance of available systems under test for some

configurations and a shortage of others. Given enough tests, this

can result in workflow disruptions. This is a hard problem to

solve without "throwing money at the problem", or being more

judicious with what configurations you support in the first place.

That is the sort of problem an organization wants to have though.

It is preferable to the problem of wasting money testing

everything on every configuration.

Since the name of the tool is pairwise, I may as well also

implement and discuss multi-set combinations. Building these

bins is actually quite straightforward, which is somewhat shocking

given every algorithm featured for doing pairwise testing at

pairwise.org was not in fact the optimal one from my 30 year old

combinatorics textbook. Pretty much all of them used

tail-call recursion in languages which do not optimize this, or

they took (good) shortcuts which prevented them from functioning

in N dimensions.

Essentially you build an iterator which, starting with the first

set, pushes a partial combination with every element of its set

matched with one of the second onto your stack.

You then repeat the process, considering the first set to be the

partial, and crank right through all the remaining sets.
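The procedure just described, with no recursion, can be sketched in Python like so. It is an illustration of the technique rather than the tool's own listing, the function name is hypothetical, and incompatibility filtering is left out.

```python
def combinations_iterative(sets):
    """Every combination taking one member from each set, built iteratively.

    Start with one partial per member of the first set, then extend
    every partial with every member of each following set in turn.
    """
    if not sets:
        return []
    partials = [[member] for member in sets[0]]
    for next_set in sets[1:]:
        partials = [
            partial + [member]
            for partial in partials
            for member in next_set
        ]
    return partials


for combo in combinations_iterative([["linux", "windows"], ["x86_64", "arm64"]]):
    print(combo)
```

Because each pass treats the accumulated partials as the "first set", the same loop works for any number of sets, which is the N-dimensional property the shortcut-taking implementations lose.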

Dealing with incompatibilities is essentially the same procedure

as above. The completed algorithm looks like so:

You may have noticed this is a greedy algorithm. If we

decided to use this as a way to generate a cache for a "god

algorithm" version of the anti-clique generator above, we could

very easily run into memory exhaustion with large enough

configuration sets, defeating the purpose. You could flush the

partials that are actually complete, but even then you'd only be

down to 1/n theoretical memory usage where n is the size of your

2nd largest configuration set (supposing you sort such that it's

encountered last). This may prove "good enough" in practice,

especially since users tend to tolerate delays in the "node added

to network" phase better than the "trying to run tests"

phase. It would also speed up the matching of available

systems under test to the desired configuration supersets, as we

could also "already know the answer".

Profiling this showed that I either had to fix my algorithm or

resort to this. My "worst case" example of 100 million tests

using the cliques() method took 3s, while generating everything

took 4s. Profiling shows the inefficient parts are almost

100% my bin-covering.

Almost all of this time is spent splice()ing huge arrays of

tests. In fact, the vast majority of the time in my test

(20s total!) is simply building the sequence (1..100_000_000),

which we are using as a substitute for a similar length argument

array of tests.

We are in luck, as once again we have an optimization suggested

by the constraints of our execution environment. Given that any

host only needs to know what it needs to execute, we can

save only the relevant indices and do lazy

evaluation. This means our sequence expansion (which

takes the most time) has an upper bound of how long it takes to

generate up to our offset. The change is

straightforward:
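
One way such a change might look, sketched under assumptions: the helper names `bin_range` and `tests_for_bin`, the even-split binning scheme, and the closure-based iterator are all hypothetical. The point is only that a host computes its own index range and stops expanding the sequence once it has passed the end of that range:

```perl
use strict;
use warnings;

# Hypothetical helper: split $total tests as evenly as possible across $bins
# hosts and return the (first, last) index range belonging to bin $which.
sub bin_range {
    my ( $total, $bins, $which ) = @_;
    my $base  = int( $total / $bins );
    my $extra = $total % $bins;    # the first $extra bins get one more test
    my $first = $which * $base + ( $which < $extra ? $which : $extra );
    my $size  = $base + ( $which < $extra ? 1 : 0 );
    return ( $first, $first + $size - 1 );
}

# Pull tests from an iterator (a closure returning the next test, or undef
# when exhausted) and keep only those inside our range, stopping at $last.
sub tests_for_bin {
    my ( $next_test, $first, $last ) = @_;
    my @mine;
    for ( my $i = 0 ; $i <= $last ; $i++ ) {
        my $test = $next_test->();
        last unless defined $test;
        push @mine, $test if $i >= $first;
    }
    return @mine;
}

my ( $first, $last ) = bin_range( 10, 3, 1 );    # second of three hosts
my $n     = 0;
my @tests = tests_for_bin( sub { $n < 10 ? 't/' . $n++ . '.t' : undef }, $first, $last );
print "@tests\n";
```

In this sketch the iterator is still consumed from index 0, so the cost is bounded by the end of the host's range rather than by the full sequence.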

The question is, can we cheat even more by starting at our offset

too? Given we are expecting a glob or regex describing a

set of files, and we don't know ahead of time what it will

produce, this seems unlikely. We could probably speed up

globbing with GLOB_NOSORT. Practically

every other sieve trick we can try (see De Morgan's

laws) is already part of the C library implementing glob

itself. I suspect that we will have to understand the parity

problem a great deal better for optimal

seeking via search criteria.
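
For reference, a minimal sketch of unsorted globbing using the core File::Glob module. The temporary directory and file names exist only to make the example self-contained:

```perl
use strict;
use warnings;
use File::Glob qw(:bsd_glob);
use File::Temp qw(tempdir);

# Create a few throwaway test files so the example can run anywhere.
my $dir = tempdir( CLEANUP => 1 );
for my $name (qw(c.t a.t b.t)) {
    open( my $fh, '>', "$dir/$name" ) or die "Cannot create $dir/$name: $!";
    close $fh;
}

# GLOB_NOSORT returns matches in whatever order the directory yields them,
# skipping the sort pass that glob performs by default.
my @unsorted = bsd_glob( "$dir/*.t", GLOB_NOSORT );

# Sort afterwards only if a stable order is actually needed.
my @sorted = sort @unsorted;
print scalar(@unsorted), " files matched\n";
```

One caveat worth checking before relying on this: if several hosts must agree on which index maps to which file, directory order may not be identical everywhere, in which case the sort may be worth keeping.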

Nevertheless, this gets our execution time for the cliques()

algorithm down to 10ms, and 3s as the upper bound to generate our

sequence isn't bad compared to how long it will take to execute

our subset of 100 million tests. We'd probably slow the

program down using a cached solution at this point, not to mention

having to deal with the problems inherent with such.

Generating all combinations as we'd have to do to build the cache

itself takes another 3s, and there's no reason to punish most

users just to handle truly extreme data sets.

It is possible we could optimize our check that a combination is

valid, and get a more reasonable execution time for combine() as

well. Here's our routine as a refresher:

Making the inner grep a List::Util::first instead seems obvious,

but the added overhead made it not worth it for the small data

set. Removing our guard on the other hand halved execution time,

so I have removed it in production. Who knew ref( ) was so

slow? Next, I "disengaged safety protocols" by turning off

warnings and killing the defined check. This made no

appreciable difference, so I have yet to run into a

situation where I've needed to turn off warnings in a tight

loop. Removing the unnecessary allocation of @compat and

returning directly shaved another 200ms. All told, I got

down to 800ms, which is in "detectable but barely" delay

territory, which is good enough in my book.
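
To illustrate the kind of trade-off discussed above, here is a sketch comparing the two styles on a hypothetical validity check. The data shape (`@incompatible` as pairs of values) and both function names are assumptions, not the production routine:

```perl
use strict;
use warnings;
use List::Util qw(first);

# Hypothetical data shape: each incompatibility is a pair of values that must
# not appear together in one combination.
my @incompatible = ( [ 'linux', 'edge' ], [ 'arm', 'legacy-driver' ] );

# Variant 1: grep scans every incompatibility and stores the matches in a
# temporary array before returning.
sub is_valid_grep {
    my (@combo) = @_;
    my %has = map { $_ => 1 } @combo;
    my @conflicts = grep { $has{ $_->[0] } && $has{ $_->[1] } } @incompatible;
    return !@conflicts;
}

# Variant 2: first() stops at the first conflict, and the result is returned
# directly with no temporary array.
sub is_valid_first {
    my (@combo) = @_;
    my %has = map { $_ => 1 } @combo;
    return !defined( first { $has{ $_->[0] } && $has{ $_->[1] } } @incompatible );
}

for my $combo ( [qw(linux firefox x86)], [qw(linux edge x86)] ) {
    printf "%-20s grep=%d first=%d\n", "@$combo",
      is_valid_grep(@$combo)  ? 1 : 0,
      is_valid_first(@$combo) ? 1 : 0;
}
```

Which variant wins depends on how long the incompatibility list is and how early a conflict tends to appear, so measuring both on representative data (for example with the core Benchmark module) is the safer way to decide.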

The thing I take away from all this is that the most useful thing

a mathematics education teaches is the ability to identify

specific problems as instances of generalized problems (to which a

great deal of thinking has already been devoted). While this

is not a new lesson, I am continually astonished by how

unreasonably effective it is. That, and exposure to the wide

variety of pursuits in mathematics gives a leg up as to where to

start looking.

I also think the model I took developing this has real

strength. Developing a program while simultaneously doing

what amounts to a term paper on how it's to operate very clearly

draws out the constraints and acceptance criteria from a program

in an a priori way. It also makes documentation a fait

accompli. Making sure to test and profile while doing this

completed the methodologically dual design (as best as is

possible without users), giving me the utmost confidence that this

program will be fit for purpose. Given most "technical debt"

is caused by not fully understanding the problem when going into

writing your program (which is so common it might shock the

uninitiated) and making sub-optimal trade-offs when designing it,

I think this approach mitigates most risks in that regard.

That said, it's a lot harder to think things through and then

test your hypotheses than just charging in like a bull in a china

shop or groping in the dark. This is the most common pattern

I see in practice doing software development professionally.

To be fair, it's not like people are actually willing to pay

for what it takes to achieve real quality, and "good enough" often

is. Bounded

rationality is the rule of the day, and our lot in life is

mostly that of a satisficer.

Optimal can be the enemy of good, and the tradeoffs we've made

here certainly prove this out.

When I was doing QA for a living, people were surprised when I told

them the most important book for testers to read is Administrative

Behavior. This is because you have to understand the

constraints of your environment to do your job well, which is to

provide actionable information to decision-makers. I'm

beginning to realize this actually suffuses the entire development

process from top to bottom.

Basically nothing about the response on social media to my prior post has shocked me.

The very first response was "this is a strawman". Duh. It should go without saying that everyone's perception of others can't be 100% accurate. I definitely get why some people put "Don't eat paint" warnings on their content, because apparently that's the default level of discourse online.

Much of the rest of the criticism confuses "don't be so nice" with "be a jerk". There are plenty of ways to politely insist on getting your needs met in life. Many of the frustrations Sawyer is experiencing in his interactions are to some degree self-inflicted. This is because he responds to far too much, unwittingly training irritating people to irritate him more.

This is the most common failure mode of "look how hard I tried". The harder you "try" to respond to everything, the worse it gets. Trust me, I learned this the hard way. If you instead ignore the irritating, they eventually "get the message" and slink off. It's a simple question: Would you rather be happy, or right? I need to be happy. I don't need other people to know I'm right.

I'm also not shocked that wading into drama / "red-meat" territory got me more engagement on a post than anything else I've got up here to date. This is just how things work online -- controversy of some kind is necessary. Yet another reason to stop being nice; goring someone's ox is just the kind of sacrifice needed to satiate the search engine gods, apparently.

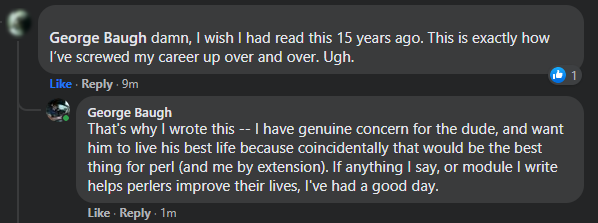

This is not to say I don't find it distasteful; indeed, there is a reason I do not just chase this stuff with reckless abandon. What I want is to have a positive impact on the community at large, and I think I may just have done it (see the image with this post).

Even though I gored a few oxen-feels posting this, it's clearly made a positive impact on at least one person's life. That alone makes it worth it. I still take the scout's vow to do a good turn daily seriously. Keep stacking those bricks, friends.

SawyerX has resigned from the Perl 5 steering council. This is unfortunate for a variety of reasons, the worst of which is that it is essentially an unnecessary act of self-sabotage which won't achieve anything productive for Sawyer.

I met Sawyer in a cafe in Riga during the last in-person EU Perl 5/6 con. Thankfully much of the discussion was of a technical nature, but of course the drama of the moment was brought up. Andrew Shitov, a Russian, was culturally insensitive to westerners; go figure. He apologized and it blew over, but some people insisted on grinding an axe because they valued being outraged more than getting on with business.

It was pretty clear that Sawyer was siding with the outraged, but still wanted the show to go on. I had a feeling this (perceived) fence-sitting would win him no points, and observed this play out.

This discussion naturally segued into his experience with P5P, where much the same complaints as led to his resignation were aired. At the time he was a pumpking, and I stated my opinion that he should just lead unrepentantly. I recall saying something to the effect of "What are you afraid of? That people would stop using perl? This is already happening." At the time it appears he was just frustrated enough to actually lead.

This led to some of the most forward progress perl5 has had in a long time. For better or worse, the proto-PSC decided to move forward. At the time I felt cautiously optimistic because while his frustration was a powerful motivator, I felt that the underlying mental model causing his frustration would eventually torpedo his effort.

This has come to pass. The game he's playing out here unconsciously is called "look how hard I'm trying". It's part of the Nice Guy social toolkit. Essentially the worldview is a colossal covert contract: "If I try hard and don't offend anyone, everyone will love me!"

It's unsurprising that he's like this, as I've seen this almost everywhere in the software industry. I was like this once myself. Corporate is practically packed from bottom to top with "nice guys". This comes into conflict with the big wide world of perl, as many of the skilled perlers interested in the core language are entrepreneurs.

In our world, being nice gets you nowhere. It doesn't help you in corporate either, but corporate goes to great effort to forestall the cognitive dissonance which breaks people out of this mental model. The reason for this is straightforward. Studies have repeatedly shown those with agreeable personalities are paid less.

Anyways, this exposes "nice" people to rationally disagreeable and self-interested people. Fireworks ensue when their covert contract is not only broken, but laughed at. Which brings us to today, where Sawyer's frustration has pushed him into making a big mistake which he thinks (at some level, or he would not have done it) will get him what he wants.

It won't. Nobody cares how hard you worked to make it right. Those around you will "just say things" forever, and play "what have you done for me lately" on repeat until the end of time. Such is our lot as humans, and the first step in healing is to accept it.

People considering hiring Sawyer in the future will not have a positive view of these actions. Rather than seeing the upright and sincere person exhausted by shenanigans that Sawyer sees in himself, they will see a person who cracked under pressure and who therefore can't be trusted with the big jobs.

I hate seeing fellow developers make some of the same mistakes I did earlier in life. Especially if the reason he cracked now has to do with other things going on in his personal life which none of us are or should be privy to. Many men come to the point where it's "Kill the nice guy, before he kills you". Let us hope the situation is not developing into anything that severe, so that he can right his ship and return to doing good work.

I'm borrowing the title of a famous post by patio11 because I clearly hate having google juice because it's good and touches on points similar to those my former colleague Mark Gardner recently made.

(See what I did there, cross site linking! Maybe I don't hate having google juice after all...)

Anyways, he mentioned that despite having a sprint fail, he still learned a lot of good stuff. This happens a lot as a software developer and you need to be aware of this to ensure you maximize your opportunities to take something positive away from everything you work on.